For a long time, cybersecurity in the private sector followed a simple rule: defend, but do not pursue. Organisations were expected to build stronger defenses, such as firewalls, antivirus systems and internal monitoring tools, and to absorb or deflect attacks within their own networks. Crossing that boundary, even to stop an ongoing attack, was largely off-limits. Actions such as infiltrating an attacker’s system or disrupting their infrastructure, often described as “hacking back”, were broadly prohibited under both domestic and international law.

That model is now under strain. Cyber threats have evolved faster than the legal frameworks designed to contain them. State-sponsored operations, ransomware groups and advanced persistent threats operate at a scale and speed that passive defenses alone struggle to match. As a result, policymakers and security practitioners are increasingly turning toward what is often called Active Cyber Defense (ACD): a spectrum of proactive measures that sit somewhere between traditional defense and outright offensive cyber operations.

This shift is not just technical; it is also legal, political and ethical. As jurisdictions begin to cautiously expand what defenders are allowed to do, a new set of risks emerges. In particular, the growing use of autonomous systems to execute defensive actions raises difficult questions about accountability, attribution and control. What was once a technical best practice (i.e., carefully orchestrating cyber responses) is quickly becoming a legal necessity.

The Limits of Passive Defense

The traditional model of cybersecurity rests on containment. Organisations secure their own systems and respond to threats that cross into their networks. This approach aligns neatly with legal principles: it respects boundaries, avoids interfering with external systems, and minimises the risk of unintended harm.

But attackers do not respect those same constraints. Modern cyber operations are distributed, anonymised and often routed through compromised third-party infrastructure. A ransomware attack, for example, may originate from servers spread across multiple jurisdictions, many of which belong to innocent actors. This asymmetry creates a structural disadvantage. Defenders are confined to their own networks, while attackers operate globally and opportunistically.

As attacks become more automated and persistent, the idea of simply “holding the line” becomes less viable. ACD emerges as a response to this imbalance. It includes measures such as deception technologies, threat intelligence gathering and, in more controversial cases, limited disruption of attacker infrastructure. The key distinction is that ACD moves beyond passive observation toward proactive interference with malicious activity. Yet this is precisely where legal complications begin.

The Legal Baseline: Why “Hacking Back” Remains Problematic

In many jurisdictions, the line between defense and offense is still sharply drawn. In the United States, for instance, the Computer Fraud and Abuse Act (CFAA) continues to define the legal boundary. At its core, the CFAA prohibits unauthorised access to computer systems. This prohibition applies regardless of intent: accessing an attacker’s system, even to stop an ongoing attack or recover stolen data, can constitute a criminal offence.

Attempts have been made to soften this position. The proposed Active Cyber Defense Certainty Act (ACDC) sought to create a limited safe harbour for private entities engaging in carefully defined defensive actions outside their networks. These included activities such as attributing attacks, monitoring adversaries and disrupting ongoing intrusions under strict conditions.

However, these efforts have not succeeded; the bill has not been enacted. The hesitation is not merely bureaucratic; it reflects deeper concerns about risk. The most significant of these is misattribution. Cyber attackers routinely disguise their origins by routing operations through compromised systems. A company attempting to “hack back” could easily target infrastructure that belongs to an innocent third party, such as a hospital, a cloud provider or even another government. The consequences could range from civil liability to international escalation.

There is also the question of proportionality. Without clear limits, defensive actions could quickly resemble offensive cyber operations, blurring the line between private security and vigilantism. For these reasons, most legal systems continue to treat external active defense, particularly anything resembling hacking back, as either prohibited or, at best, legally ambiguous.

International Law and the Problem of Sovereignty

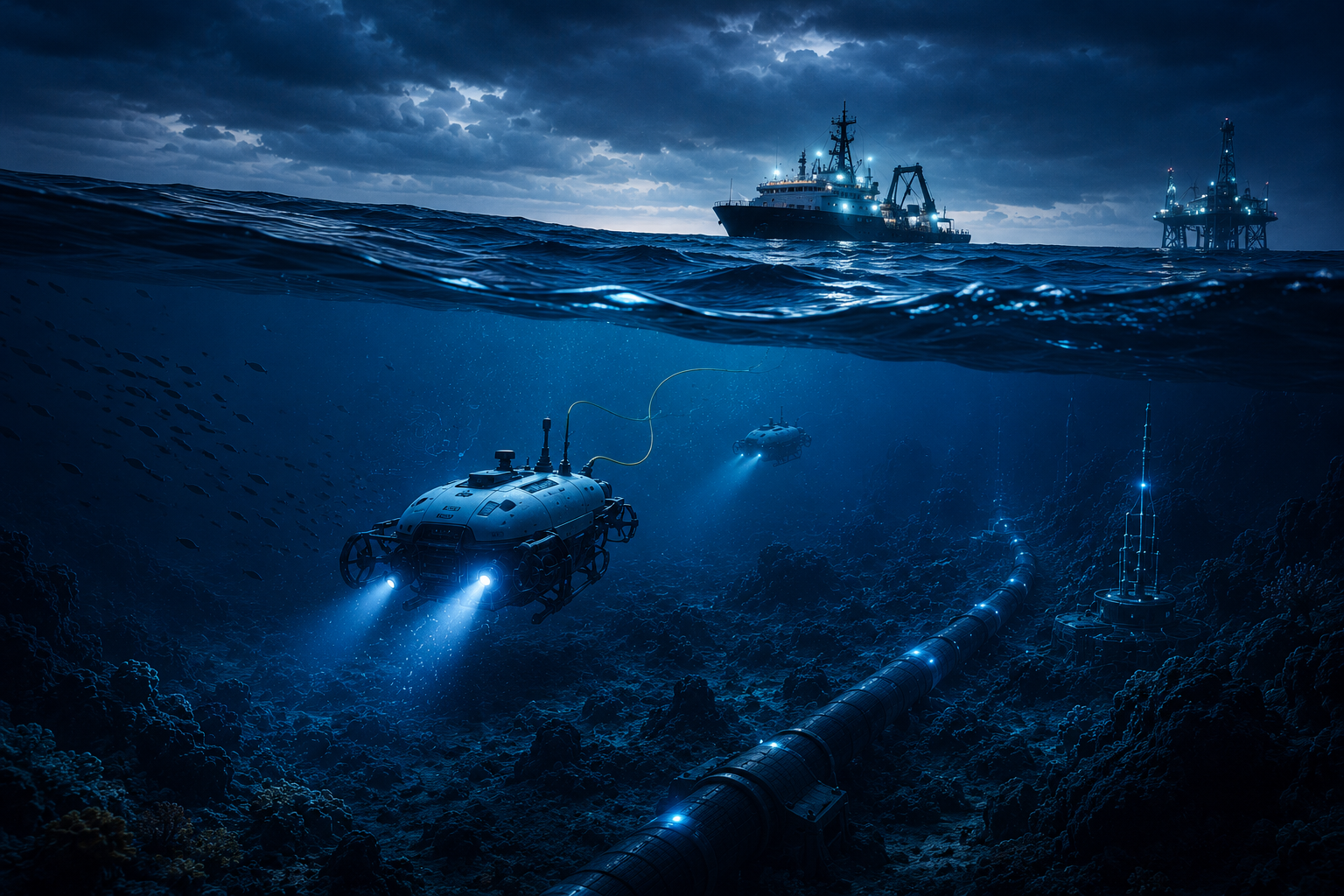

If domestic law is restrictive, international law is even more so. Cyber operations do not occur in a vacuum. They rely on physical infrastructure, including servers, cables and data centres, located within sovereign territories. Interfering with that infrastructure, even indirectly, can amount to a violation of another state’s sovereignty.

The Tallinn Manual 2.0, while not legally binding, provides a widely accepted framework for interpreting how international law applies to cyber operations. It makes clear that cyber activities crossing borders must respect principles such as sovereignty and non-intervention. Under this framework, most forms of active cyber defense that extend beyond national borders are legally problematic.

There are limited exceptions, such as countermeasures taken by states in response to internationally wrongful acts, but these are tightly constrained. They require clear attribution, proportionality and a specific objective: to induce the offending state to cease its conduct. Crucially, these doctrines apply to states, not private actors. A private company cannot unilaterally invoke the right to take countermeasures. Unless acting under explicit state authority, its actions remain subject to ordinary legal prohibitions. This creates a significant gap. While threats are global, the authority to respond remains largely national, and tightly controlled.

A Shift in Practice: Japan’s Active Cyber Defense Model

While many countries remain cautious, some are beginning to rethink this balance. Japan offers a notable example. Recent legislative reforms have fundamentally expanded the country’s approach to cybersecurity. Rather than limiting itself to detection and response within domestic networks, Japan has moved toward a more proactive model.

Government agencies are now authorised, under certain conditions, to access and disable infrastructure used to launch cyberattacks, even when that infrastructure is located abroad. This represents a significant shift. It acknowledges that effective defense may require action beyond national borders, and it formalises the role of the state in conducting such operations.

Equally important is the integration of the private sector. Critical infrastructure operators are now required to report incidents and cooperate with government-led cybersecurity efforts. Information sharing has been institutionalised and new mechanisms have been created to coordinate responses across public and private actors.

In effect, Japan’s model blurs the line between defense and pre-emption. It does not fully endorse private-sector hacking back, but it creates a framework in which proactive measures are coordinated, authorised, and embedded within national security strategy. This raises an important question: if more jurisdictions follow this path, how will these expanded powers be exercised in practice – especially as automation becomes central to cyber defense?

The Rise of Autonomous Cyber Defense

Modern cyber threats operate at machine speed. Attacks can unfold in milliseconds, far faster than human operators can respond. To keep pace, organisations are increasingly deploying autonomous systems, often referred to as Autonomous Intelligent Cyber-defense Agents (AICAs). These systems can detect anomalies, analyse threats and execute predefined responses without real-time human intervention.

Within internal networks, they are already highly effective. They can isolate compromised systems, block malicious traffic and adapt to evolving threats with minimal delay. The challenge arises when these capabilities are extended beyond defensive boundaries.

Some advanced systems are capable of what might be called “stealthy degradation” – subtle, targeted interference with malicious infrastructure. Rather than launching a visible counterattack, they infiltrate and disrupt the logic of an attacker’s tools, gradually reducing their effectiveness. Technically, this is sophisticated and appealing. Legally and ethically, it is far more complicated.

Attribution, Proportionality and the Limits of Automation

The central problem with autonomous active defense is not capability; it is judgment. Legal frameworks rely on concepts such as attribution and proportionality. Before taking action, a defender must be reasonably certain about who is responsible for an attack. Any response must be proportionate to the harm suffered and must avoid unnecessary collateral damage.

These are inherently human judgments. They require context, interpretation and an understanding of broader consequences. Autonomous systems, by contrast, operate on data and predefined rules. They can process vast amounts of information, but they do not “understand” geopolitical nuance.

If an algorithm misattributes an attack, it may trigger a response against the wrong target. If that target is located in another jurisdiction, or belongs to an innocent third party, the legal consequences could be severe. Collateral damage is another concern. Attackers frequently use civilian infrastructure as a staging ground. An automated response targeting that infrastructure could inadvertently disrupt essential services, from healthcare systems to financial networks.

There is also a deeper issue of accountability. If an autonomous system takes an action that violates the law, who is responsible? The developer? The operator? The organisation that deployed it? Existing legal frameworks are not well-equipped to answer these questions. For these reasons, there is a growing consensus that human oversight remains essential. Even in highly automated environments, there must be mechanisms to ensure that decisions align with legal and ethical standards.

Orchestration as a Legal Safeguard

As cyber defense becomes more proactive and more automated, the need for structured governance becomes critical. It is no longer sufficient to rely on individual tools or isolated systems. What is required is a coordinating layer that ensures all actions, especially autonomous ones, are consistent with legal, policy and operational constraints.

This is where orchestration comes into play. A robust orchestration system acts as a central decision-making framework. It integrates data from multiple sources, such as internal telemetry, external threat intelligence and behavioural analytics, and applies both machine learning and predefined policy rules to determine appropriate responses.

From a legal perspective, this serves several important functions. First, it strengthens attribution. By correlating multiple signals, orchestration systems can provide a more reliable basis for identifying threats. This reduces the risk of acting on incomplete or misleading information.
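As a rough illustration of what correlating multiple signals could look like, the sketch below combines per-source confidence scores with a noisy-OR rule. Everything in it is an assumption made for illustration: the `Signal` type, the source names and the independence assumption behind the formula are not drawn from any particular product, and a score of this kind indicates how strongly an indicator appears malicious, not who is legally responsible for it.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class Signal:
    source: str        # hypothetical label, e.g. "internal_telemetry"
    confidence: float  # 0.0 to 1.0: the source's own estimate that the indicator is malicious


def combined_confidence(signals: list[Signal]) -> float:
    """Noisy-OR combination of per-source scores.

    Assumes the sources are independent, which is a strong assumption:
    feeds that copy from one another would overstate the result.
    """
    # Keep only the strongest score per source, so that one noisy feed
    # repeating itself cannot inflate the combined value.
    best: dict[str, float] = {}
    for s in signals:
        best[s.source] = max(best.get(s.source, 0.0), s.confidence)
    return 1.0 - prod(1.0 - c for c in best.values())


signals = [
    Signal("internal_telemetry", 0.5),
    Signal("internal_telemetry", 0.4),   # same source, weaker score: ignored
    Signal("external_threat_intel", 0.5),
]
score = combined_confidence(signals)
```

With two independent sources at 0.5 each, the combined score is 0.75: higher than either alone, but still short of certainty. Whether any such number is enough to justify action remains a legal judgment rather than a computational one.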

Second, it enforces boundaries. Even if an autonomous agent is capable of taking aggressive action, the orchestration layer can restrict when and how that action is permitted. For example, it can ensure that certain responses are only triggered under specific conditions, or that they are limited to particular jurisdictions.
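A boundary rule of this kind might be sketched as a small policy gate that every proposed action must pass before execution. The action names, the jurisdiction code `"XX"` and the 0.9 threshold below are all hypothetical placeholders, and the rule that external actions are never executed autonomously is one possible design choice rather than a statement of what any law requires.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate_to_human"
    DENY = "deny"


@dataclass(frozen=True)
class ProposedAction:
    name: str
    target_in_own_network: bool
    target_jurisdiction: str       # placeholder code for where the target is located
    attribution_confidence: float  # 0.0 to 1.0, supplied by upstream analysis


# Hypothetical policy values; a real deployment would load these from reviewed policy.
AUTONOMOUS_INTERNAL_ACTIONS = {"isolate_host", "block_traffic"}
AUTHORISED_EXTERNAL_JURISDICTIONS = {"XX"}
MIN_ATTRIBUTION_CONFIDENCE = 0.9


def evaluate(action: ProposedAction) -> Decision:
    if action.target_in_own_network:
        # Routine containment inside the defender's own network may run unattended.
        if action.name in AUTONOMOUS_INTERNAL_ACTIONS:
            return Decision.ALLOW
        return Decision.ESCALATE
    # Anything touching external infrastructure is denied unless policy covers it.
    if action.target_jurisdiction not in AUTHORISED_EXTERNAL_JURISDICTIONS:
        return Decision.DENY
    if action.attribution_confidence < MIN_ATTRIBUTION_CONFIDENCE:
        return Decision.DENY
    # Even when every check passes, a human must approve the external action.
    return Decision.ESCALATE


internal = evaluate(ProposedAction("isolate_host", True, "XX", 0.2))
external = evaluate(ProposedAction("disrupt_c2", False, "XX", 0.95))
```

The point of the sketch is that the agent's capability and its permission are separated: the gate, not the agent, decides whether an action proceeds, is refused or goes to a human reviewer.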

Third, it enhances transparency. One of the key challenges in using AI-driven systems is the “black box” problem. If a system cannot explain its decisions, it becomes difficult to justify those decisions in a legal or regulatory context. Orchestration platforms that incorporate explainability features can provide detailed audit trails, showing how decisions were made and why specific actions were taken.
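One way an audit trail could be made harder to alter after the fact is to chain records by hash, so that each entry commits to the one before it. This is a technique brought in here for illustration and is not described in the sources discussed; the field names and the `GENESIS` marker are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # hypothetical marker for the start of the chain


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def audit_record(prev_hash: str, action: str, decision: str,
                 reasons: list[str], signals: dict[str, float]) -> dict:
    """Build one audit entry that records what was decided and why."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "decision": decision,
        "reasons": reasons,
        "signals": signals,
        "prev_hash": prev_hash,
    }
    return {**body, "hash": _digest(body)}


def verify_chain(records: list[dict]) -> bool:
    """Return True only if every record is intact and linked to its predecessor."""
    prev = GENESIS
    for record in records:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


r1 = audit_record(GENESIS, "isolate_host", "allow",
                  ["target inside own network"], {"internal_telemetry": 0.9})
r2 = audit_record(r1["hash"], "disrupt_c2", "deny",
                  ["jurisdiction not authorised"], {"external_threat_intel": 0.6})
chain_ok = verify_chain([r1, r2])
```

A record of this shape captures the inputs, the decision and the stated reasons together, which is the material a regulator or court would be likely to ask for. It does not by itself explain the behaviour of an underlying machine learning model.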

This is increasingly important as regulatory frameworks evolve. Instruments such as the EU AI Act and various risk management frameworks emphasise accountability, traceability and human oversight. Organisations that cannot demonstrate these qualities may face significant legal exposure. In this sense, orchestration is not just a technical solution, but a form of legal risk management.

Conclusion: A New Equilibrium

Cybersecurity is entering a new phase. The limitations of passive defense are clear, and the pressure to adopt more proactive approaches is growing. At the same time, the legal and ethical constraints on such approaches remain significant.

The result is a delicate balancing act. On one side is the need for speed, adaptability and effectiveness in the face of increasingly sophisticated threats. On the other is the need to uphold legal principles such as sovereignty, proportionality and accountability.

ACD sits at the centre of this tension. It offers a way to close the gap between attackers and defenders, but it also introduces new risks, particularly when combined with autonomous technologies. The path forward is unlikely to involve a simple shift from prohibition to permission. Instead, it will require carefully designed frameworks that combine legal authority, technical capability and robust governance.

Orchestration, which is grounded in transparency, policy control and real-time intelligence, will be a key part of that framework. It provides a way to harness the power of automation without losing sight of legal and ethical constraints. Ultimately, the question is whether the systems guiding defensive actions will be sophisticated enough to ensure that, in the process of defending networks, they do not undermine the very legal and normative structures they are meant to protect.

Bibliography

1. Act on the Arrangement of Relevant Acts in Consequence of the Enforcement of the Act for the Prevention of Damage from Unauthorized Acts against Important Electronic Data Processing Systems 2025 (Japan)

2. Active Cyber Defense Certainty Act 2019, HR 3270, 116th Cong (US)

3. Computer Fraud and Abuse Act 1986, 18 USC s 1030 (US)

4. Couzigou I, ‘Hacking-Back by Private Companies and the Rule of Law’ (2020) 80 Zeitschrift für ausländisches öffentliches Recht und Völkerrecht 479

5. Kott A and others, ‘Autonomous Intelligent Cyber-Defense Agent (AICA) Reference Architecture, Release 2.0’ (DEVCOM Army Research Laboratory 2019)

6. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence OJ L

7. Schmitt MN (ed), Tallinn Manual 2.0 on the International Law Applicable to Cyber Operations (Cambridge University Press 2017)

8. Smeets M, ‘The Strategic Promise of Private Sector Active Cyber Defense’ (2021) 7(1) Cybersecurity 1